My AI Co‑Pilot is brilliant, but it can't lead. Here's the method I use.

I have been watching the recent flurry of AI announcements, and it only strengthens my belief that human-centered leadership is needed more than ever. In the last few weeks alone, we’ve seen one release after another, such as Anthropic’s Claude 4.6 lineup and OpenAI’s GPT-5.3-Codex, all of which are starting to hand the execution of complex, autonomous workflows to AI. Meanwhile, the technology sector is going through a massive market correction that has wiped out $2 trillion in software investments over the last few months, as investors try to come to terms with the massive infrastructure spending required and the harsh reality of the tools now starting to emerge.

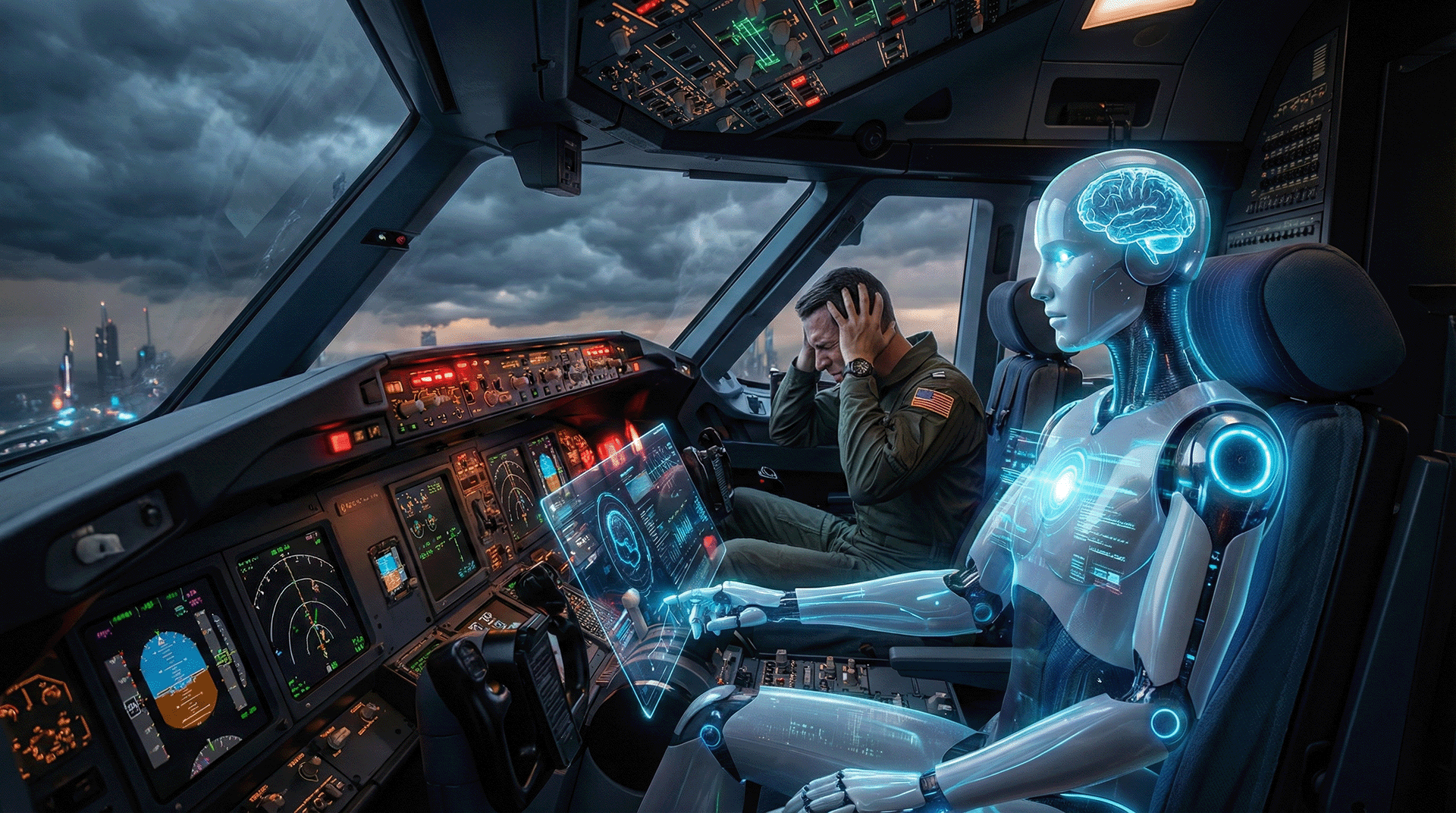

While the technology is advancing at an unprecedented rate, throwing a powerful but still somewhat unpredictable AI co-pilot at a clogged-up workforce is no recipe for success; there’s too much turbulence to navigate without enough human judgment to steer through it.

Many of today’s technology leaders are no doubt wrestling with the same issues. We have more AI tools than we know what to do with, yet leaders are struggling. DDI’s research from just last month found that 71% of leaders are more stressed than ever, and an astonishing 40% are thinking about quitting.

Read more at:

- Leadership Trends 2026: What's Next for Leaders and Organizations

- Four Leadership Trends That Can't Be Ignored - UW–Madison

Hype cycles? Yeah, I’ve been around long enough to spot them. Something about this AI thing sits differently in the gut, though. It’s not merely another trend that will fade fast into history.

Lately, that extra screen has turned into more than just a productivity trick - it’s started to feel like another kind of teammate. Some people call this “Parallel Intelligence”: learning to work alongside these tools and blending our judgment with their processing power, as described in these pieces:

- Parallel Intelligence: The Leadership Skill No One's Talking About …

- Embracing AI in Leadership: Why “Parallel Intelligence” Is the New ...

Here lies the issue - this smart co‑pilot can do things nobody on the team can, yet it is lost where instinct is required. It can tune a process until it runs perfectly, but it falls short where gut calls matter. It crunches numbers fast, but it misses body language, and it cannot shortcut the slow work of building trust. From what I’ve seen, success now depends on both sets of skills. What matters most isn’t becoming a skilled prompt engineer. Instead, focus on strengthening the ability that shaped your work long before AI: effective leadership.

This belief shapes the method I use. Five elements hold it together - Connection, Conscience, Creativity, Clarity, and Curiosity. They are how I actually put AI’s strengths to work - by pairing purpose with practical steps, so that machine output becomes something useful for people who lead others.

The Co‑Pilot in the Room: Acknowledging a Stressed‑Out Cockpit

We can’t just hand a stressed‑out leader a powerful new AI tool and hope for the best.

Before we get into solutions, let’s acknowledge the challenge. Leading a team is tough. According to the same DDI report, 77% of CHROs are not confident in their leadership bench. Leaders are being asked to fly the plane while upgrading its engines. Putting a powerful but unpredictable AI co-pilot at the controls without a new operating manual is unlikely to end well. This is where a proper framework becomes more than a “nice to have” - it’s a pre-flight checklist.

The 5 Cs: My Preferred Method for Human‑AI Partnership

This isn't about prompt engineering; it’s about leading humans who use AI.

This framework is becoming the backbone of my strategy. It’s how I think about ensuring we are the ones guiding the technology, not the other way around.

Connection: Keeping the Human in the Loop

AI gives you the “what”; your job is to ask “how” and “why”.

AI can be great at optimizing communication: scheduling, summarizing, and drafting emails. But efficiency is not the same as empathy. For me, Connection is about using the data as a starting point, not a conclusion.

For example, an AI can run a sentiment analysis on team chats and tell you, the leader, that the mood is stressed. That should be your cue to raise it in your next 1:1s and team meetings. The data suggests things are tense, but you need to find out what’s really going on.

Conscience: Our Ethical Rudder

Treating AI ethics as an afterthought is no longer just bad practice; it's a business risk.

An AI model trained on the public internet reflects all of its biases. Conscience is the non‑negotiable job of acting as an ethical filter. When your teams evaluate a new AI tool, model, or tech stack, encourage them to run AI “Pre‑Mortems.” They should ask, “What’s the most likely way this could produce a biased or unfair outcome for our customers or our own people?” With regulations like the EU AI Act coming online, this is now a matter of compliance. The Act deems many HR tools “high‑risk” and legally requires human oversight (EU AI Act HR Compliance: How HR Can Prepare). Conscience is our guide to meeting that standard.

Creativity: The Art of the Remix

AI gives you the dots; your job is to connect them into something no one has seen before.

AI is a pattern‑recognition machine. It can analyze huge amounts of data and tell you what’s happening. What it can’t do (at least not yet) is come up with a truly original idea for what to do next. That’s where human creativity comes in. I use AI to identify trends, then discuss them with my team. It’s our job as humans to brainstorm the disruptive applications. AI provides the pieces; our creativity puts them together in new ways.

Clarity: The Master Storyteller

If you can't explain an AI insight in 30 seconds, it has no business value.

AI speaks the language of probabilities and data sets. Business speaks the language of vision and narratives. A leader has to translate. Clarity is distilling an AI insight that runs a thousand words down to a single sentence that a non-technical decision maker can grasp and act upon. If there’s no soundbite explaining why an AI recommendation is in the interests of the business, there’s no value in implementing it.

Curiosity: The Fuel for Relevance

In the age of AI, standing still is the fastest way to become irrelevant.

What was cutting‑edge in AI six months ago is already old news. The only way to keep up is to stay curious. I’ve started blocking out a “Curiosity Hour” each weekend. The only rule is to play with a new tool or prompting technique that has nothing to do with my current projects. It’s not about staying busy; it’s about building a habit of discovery that keeps me from falling behind.

From Buzzwords to Business Case: The Data Doesn’t Lie

Mastering the human side of AI isn’t a soft skill; it’s a direct driver of productivity and profit.

This framework isn’t just about feeling good; it’s about better performance. The numbers are starting to prove it. PwC’s 2024 Global AI Jobs Barometer found that industries with high AI exposure are seeing nearly five times the labor productivity growth of less exposed industries. And jobs that require specialized AI skills are commanding wage premiums of up to 25% (PwC 2024 Global AI Jobs Barometer | PwC). I believe leaders who master these 5 Cs are the ones who will unlock this value. They will build teams that attract the best talent and use AI to multiply their impact, not just automate their tasks.

The Reality Check: This Isn’t a Silver Bullet

A good framework is a start, but we have to be realistic about the organizational shifts required.

As much as I stand by this framework, I'm a pragmatist. I know it's not a magic fix. Both research and my own observations point to a few hard realities we need to face.

The 5 Cs Are Not Enough

- Individual skill is a starting point, but it’s not sufficient. Research from MIT and BCG suggests that to succeed, companies need a culture of “augmented learning,” in which the whole organization learns to use these new tools together. See Learning to Manage Uncertainty, With AI – MIT SMR + BCG

The “Senior Trap”

- There’s a dangerous assumption that junior, digital‑native employees will naturally teach senior leaders how to use AI. In practice, junior staff often lack the deep strategic context to guide senior decisions.

The Co‑Pilot vs. The Air Traffic Controller

- An older McKinsey report (from 2023, but still relevant) shows that most companies use AI in siloed functions rather than across the board. This means a leader’s job is often less about being a co‑pilot and more about being an air traffic controller, making sure different AI‑powered projects don’t collide. See The state of AI in 2023: Generative AI’s breakout year | McKinsey

Conclusion: From Tool to Teammate

The goal isn’t to become a master of AI; it’s to become a better leader of people who use AI.

The AI era is here. Fighting it is pointless, and blindly adopting it is reckless. The only choice is to lead it with intention and a strong dose of practical wisdom.

By focusing on the 5 Cs, I’ve started to see AI less as a tool to be managed and more as a teammate to be coached. A brilliant, fast, and sometimes frustratingly literal teammate, but a teammate nonetheless. I believe this partnership, this “Parallel Intelligence,” is the future of high‑impact leadership.

My recommendation is to start today:

- Self‑Audit: Where are you strongest and weakest across the 5 Cs?

- Start Small: Pick one practice, like the “Curiosity Hour,” and try it this month.

- Find Your People: Start a small group with a few peers to share what you’re learning, both the successes and the failures.

When we combine our human judgment with machine speed, we do more than just survive the AI wave. We lead it.
