The Sleepless Engineer: What Andrej Karpathy's autoresearch Really Signals

A lot of people are asking the wrong question.

Will AI replace your best researcher? That is the wrong question. The better one is this: which specific research tasks have become cheap enough for machines to take over? And once those chores are handled by algorithms, what happens to a really sharp engineering team?

Andrej Karpathy's open-source project, autoresearch, is a big deal. Not because AI scientists have arrived. They haven't. Not even close. What makes it pivotal is the clear, practical demonstration of what an AI system can actually do. It runs experiments relentlessly, day and night. It edits PyTorch code constantly. It tries new ideas, often on a shoestring budget. Anything useful stays. The rest is tossed.

This morning’s obligatory “AI breakthrough of the century” story split the online comments along the usual lines. On one side, the prospect of true recursive self-improvement prompted outlandish predictions, from merging with future computer minds to transforming the economy. On the other, plenty of people found the achievement laughable: “just another example of deep learning researchers playing with hyperparameters and charging outrageous fees in the process”. To me, both views miss the point by a wide margin.

After reading the details, and the ensuing discussion on the mechanics, I have a somewhat different view of things. autoresearch isn’t a machine scientist. It’s a machine experimentalist, and actually a pretty plausible one. A scientist formulates hypotheses. An experimentalist carries out the experiments. This loop can now be done relatively quickly, easily, and repeatedly.

Introduction: From Hype to Useful

The real story is not AGI redesigning itself, but a practical system that automates one narrow and valuable part of research.

Artificial intelligence is in a truly wild spot, isn't it? Almost every single week, it seems, a brand-new "agent" demonstration simply pops right up. A tiny few genuinely spark interest. The vast majority, however? Pure BS. Somebody'll flash a browser just clicking away at buttons. Then a model spits out a file. That benchmark, plastered relentlessly across every social platform imaginable, looks undeniably impressive, doesn't it? Still, Monday morning invariably arrives. And that's when reality, more often than not, makes its rather stark appearance.

autoresearch feels different to me because it is constrained. That is not a weakness. It is the whole point.

Is Karpathy's autoresearch trying to crack all of research? No way. Just a loop. That's all it aims for. Some code gets a slight adjustment. A swift test is then executed. The figures emerge. Should there be an uplift, it's immediately saved. Then, do it again. Intensely focused rhythm. It’s exactly why every engineering lead should truly be paying attention.

So what is it? The first sign of a feedback loop in which AIs improve each other, or a perfectly reasonable automation story overhyped by the tech media?

I think this is more interesting than most of the hype. It’s a working example of one part of the R&D process moving from a human task to a machine task while still reserving the crucial human judgment.

What autoresearch Actually Does

Its power comes from the loop, and the loop works because the boundaries are tight.

At the core, autoresearch is not complicated. That is one reason it lands so well.

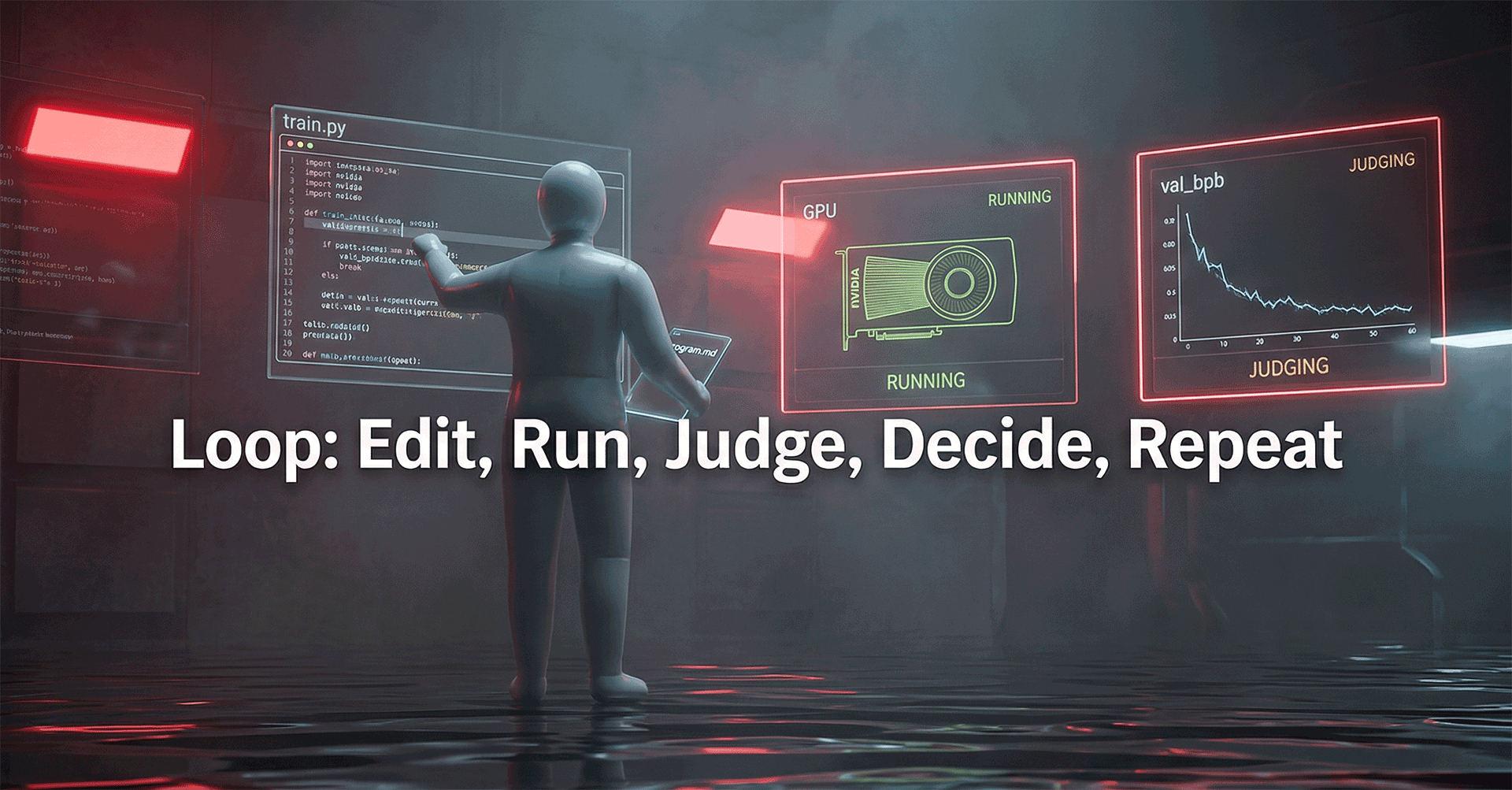

The loop looks like this:

- Edit: the agent modifies train.py

- Run: the code executes for about five minutes on a single NVIDIA GPU

- Judge: it checks val_bpb, validation bits-per-byte

- Decide: if the metric improves, commit the change; if not, revert it

- Repeat: start again from the new baseline

That sounds almost too simple. Good. A lot of useful systems are.
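To make the shape of the loop concrete, here is a minimal Python sketch. It is not Karpathy's code. `toy_val_bpb` and `toy_propose` are made-up stand-ins for the five-minute training run and the agent's edit to train.py, and "commit" and "revert" are reduced to keeping or discarding a config dict.

```python
import math
import random


def toy_val_bpb(cfg):
    """Hypothetical stand-in for a five-minute training run.

    Returns a fake validation bits-per-byte that is lowest at lr = 1e-3.
    """
    return 1.0 + (math.log10(cfg["lr"]) + 3.0) ** 2


def toy_propose(cfg, rng):
    """Hypothetical stand-in for the agent's edit: nudge the learning rate."""
    return {"lr": cfg["lr"] * rng.uniform(0.5, 2.0)}


def run_loop(baseline, propose, evaluate, n_iters):
    best_cfg = baseline
    best_score = evaluate(best_cfg)
    history = [best_score]
    for _ in range(n_iters):
        candidate = propose(best_cfg)   # Edit
        score = evaluate(candidate)     # Run + Judge
        if score < best_score:          # Decide: lower val_bpb wins
            best_cfg, best_score = candidate, score  # "commit"
        # otherwise "revert": the candidate is simply dropped
        history.append(best_score)      # Repeat from the current baseline
    return best_cfg, best_score, history


rng = random.Random(0)  # fixed seed so the toy run is repeatable
cfg, score, history = run_loop(
    {"lr": 0.1}, lambda c: toy_propose(c, rng), toy_val_bpb, 50
)
print(f"start {history[0]:.3f} -> end {score:.3f}")
```

The only design decision in there is the `if score < best_score` line. Everything interesting about the real system lives in what `propose` is allowed to touch and what `evaluate` actually measures.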

Humans aren't disappearing. Our job just got a serious upgrade. Nobody's going to be poking around in Python code, line by line, all the time. Instead, you'll be cooking up the master plan, right inside program.md. That little file? It is not some boring old settings document. Think of it more as the grand design for how we even approach research, laying out the ground rules for the entire operation. This tells the whole system what kind of ideas are okay to chase, exactly what winning looks like, and even where it needs to cool its jets and not overthink things.

Why This Is More Than AutoML With Better Branding

The leap is not that it tunes faster, but that it edits and evaluates code as part of one continuous loop.

Why the skepticism? It is not rocket science to figure out. We've seen this before. AutoML? You remember that, right? What about NAS? Even HPO, too. Most of the time, these are all about searching and trying things.

So is this just one more round of that? I do not think so.

The family resemblance is real, but autoresearch goes a step further in a few ways that matter.

First, it closes the loop from beginning to end. The system suggests a modification, then writes the code needed to make it happen. The experiment is executed and its results are evaluated. Importantly, the successful change is kept. This is no easily missed footnote. It is the whole shebang: the full operational sequence, start to finish.

Second, it doesn't just tweak settings. It dives straight into the code. Typical optimization tooling adjusts pre-set parameters and numbers, the learning rate for instance. autoresearch is different. It can change the very logic of how a model is trained: the way an optimizer behaves, for example, or what a layer does within the network. Structural choices made inside the training loop are fair game too. The system explores the entire allowed codebase, and it takes its cues from plain language.

That is a real jump.

Third, it changes the economics of attention. A good engineer can run a few thoughtful experiments in a day. They get distracted. Meetings happen. Context switches happen. Humans need sleep. The machine does not.

Now, to clarify, if a system can run a couple of hundred small experiments per day while the engineers are sleeping, that does not make the engineers less valuable; it merely changes where the engineers can best add value to the system.

Picture this. Not so long ago, every single dish was scrubbed by human hands, remember that? Now, dishwashers tackle the grime. You, though, still get to pick the detergent, decide what goes inside, choose the brand of dishwasher (or even better, influence how the next model is designed), and judge if those glasses truly sparkle enough for your discerning eye. But to insist on washing each plate by hand, all for the sake of preserving some dusty old "craft"? That's just silly.

The Part Skeptics Get Right

A system that optimizes a short proxy can become very good at winning the proxy and surprisingly bad at the real goal.

Now the caution.

There's a hard limit on how far such a system can go. That limit comes from optimizing a single metric, and from one side effect in particular: given such a constraint, any optimizer will eventually find a shortcut.

Skeptics argue that research tools need to operate at a higher level than simple search. Simple search is not a bad tool, but it can only produce the kind of results we get from autoresearch, which is a “hill climber”. A hill climber accepts small moves that improve on the current best. It doesn’t look ahead, so it tends to get stuck in local optima, because small steps will never carry it across a valley to a dramatically higher peak. Moves that cause a short-term dip before a long-term gain are rejected too. It’s always short term versus long term. The program decides based only on whether the ground went up on the last step.
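The local-optimum problem is easy to see on a toy landscape. The sketch below is purely illustrative: the `heights` list is invented, and it uses "higher is better" to match the hill metaphor (val_bpb is the opposite, lower is better).

```python
def hill_climb(heights, start):
    """Greedy one-step hill climber on a 1D landscape.

    Moves to the higher neighbour while one exists and stops as soon as
    neither neighbour is strictly higher than the current position.
    """
    pos = start
    while True:
        neighbours = [i for i in (pos - 1, pos + 1) if 0 <= i < len(heights)]
        best = max(neighbours, key=lambda i: heights[i])
        if heights[best] <= heights[pos]:
            return pos  # no uphill step left: a peak, maybe only a local one
        pos = best


# Invented landscape: a small peak at index 3, a much taller one at index 9.
heights = [0, 1, 2, 3, 2, 1, 2, 4, 6, 8, 6]

stuck_at = hill_climb(heights, start=0)
print(stuck_at, heights[stuck_at])  # stops on the small peak
print(max(range(len(heights)), key=lambda i: heights[i]))  # the tall peak
```

Starting from the left, the climber settles on the small peak and never sees the tall one, because getting there means walking downhill first. That is the dip-then-gain move the loop rejects.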

That is useful. It might sometimes be dangerous.

Goodhart's Law is still very much in effect. Searching for the best five-minute run has produced some wins. The question is whether those wins are training a distance runner, or just a sprinter that excels over very short distances.

Something critical was flagged by the SkyPilot team. It seems the settings unearthed on an NVIDIA H100 did not align with what they found on an H200. Why? The quicker hardware simply processed more steps in that identical five-minute period. So, the proxy is not just about the model you're using. Hardware counts too.
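A back-of-the-envelope sketch shows why a fixed wall-clock budget is a hardware-dependent proxy. The throughput numbers here are invented for illustration, not measurements of an H100 or H200, and the warmup example is my own assumption about one way a setting could fail to transfer.

```python
def steps_in_budget(budget_s, steps_per_s):
    """Training steps that fit into a fixed wall-clock budget."""
    return int(budget_s * steps_per_s)


def warmup_fraction(warmup_steps, budget_s, steps_per_s):
    """Share of the run spent in warmup for a fixed warmup length."""
    return warmup_steps / steps_in_budget(budget_s, steps_per_s)


BUDGET_S = 5 * 60  # the five-minute run

# Hypothetical throughputs for a slower and a faster GPU.
slower, faster = 2.0, 3.0

print(steps_in_budget(BUDGET_S, slower), steps_in_budget(BUDGET_S, faster))
print(warmup_fraction(150, BUDGET_S, slower))
print(warmup_fraction(150, BUDGET_S, faster))
```

Same config, same five minutes, but the faster card runs more steps, so a step-based setting such as warmup length covers a different share of the run. A value tuned on one machine is effectively a different experiment on the other.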

The Economics Are Better Than They Look, and Worse Too

The cheap part is generating candidate wins; the expensive part is proving they are real.

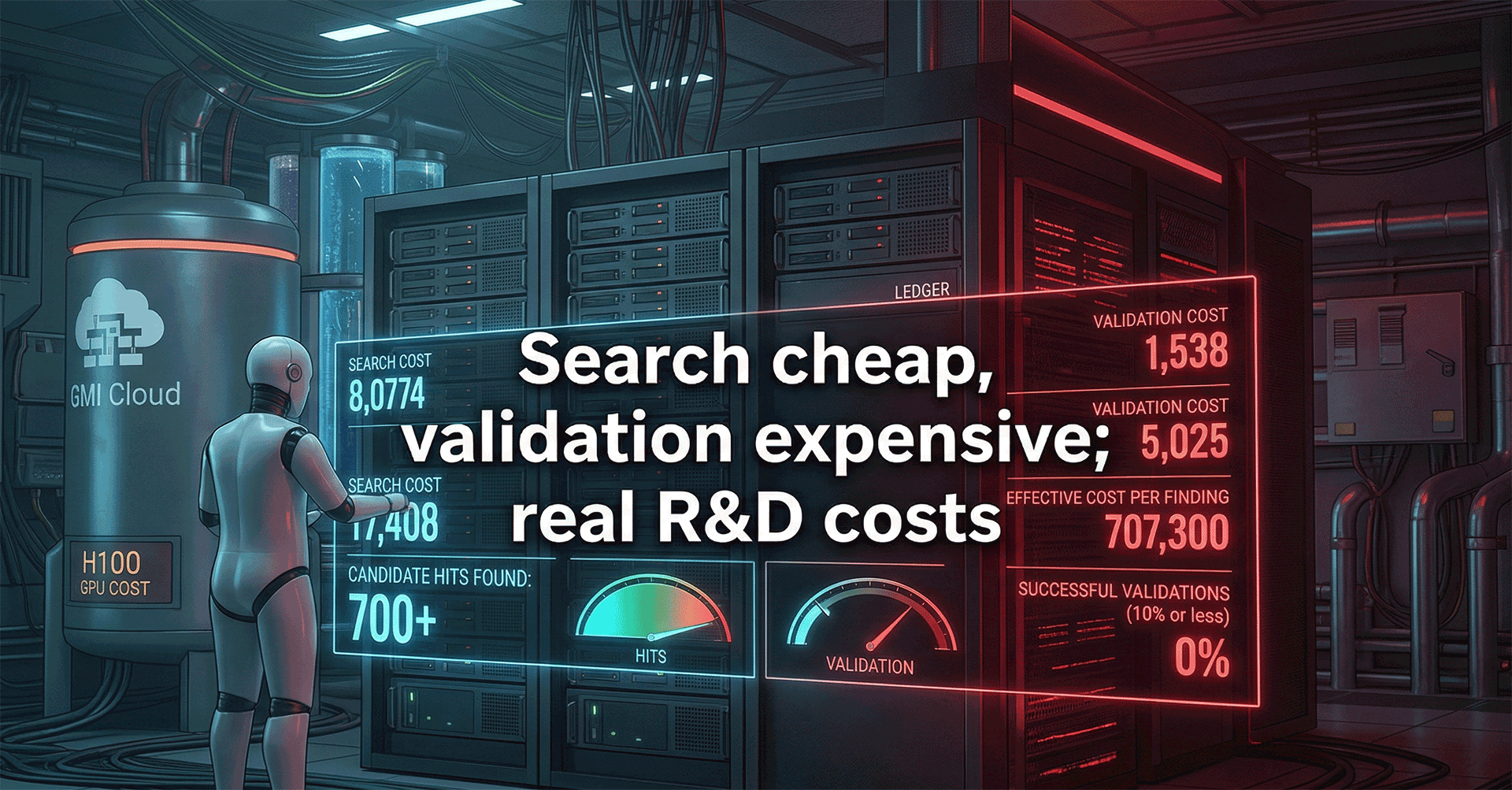

The headline cost numbers are not outrageous. That surprised some people.

How much does the cloud compute cost? For a single GPU working through the night, cranking out about 126 experiments, you can expect to pay anywhere from $25 to $60. That's just for the raw compute, mind you, and it assumes typical H100 rental prices like the ones listed here: How Much Does the NVIDIA H100 GPU Cost in 2025? Buy vs. Rent Analysis - GMI Cloud. Consider the larger SkyPilot experiment. It ran over 700 successful experiments on 16 GPUs in eight hours. The total bill? Under $300. That's roughly $0.43 per valid experiment.

On paper, that sounds fantastic. And in one sense, it is.

But I would be careful with the conclusion. Search is cheap. Validation is not.

The important part for executives to grasp is this. Say we spend $50 overnight, are rewarded with 20 unique “hits,” and calculate our cost per winning finding to be $2.50. That price feels inexpensive. But then we have to consider how many of those hits have legs: the ones that will withstand longer runs, broader validation and closer analysis. Assume for the sake of argument that only 10% of the hits fall into this category (experts say the real number is often in the single digits). Our effective cost per winning finding is then $25. The search was the same. The equipment was the same. The “cost” was entirely different.

That is not a flaw in the project. It is just how R&D works.

The Real Shift: From Researcher to Research System Designer

Human value moves up the stack when the machine handles the repetitive loop.

This is where I think the project earns its place in a broader technology conversation.

Why does autoresearch matter? Not just because it mimics scientific inquiry. Its true importance comes from a different place entirely. It completely automates one particular kind of experiment. And that automation shoves a tricky management question right in your face: if a machine can handle this entire routine, what are people supposed to do in this type of research work?

The answer is straightforward. Humans should own the frame.

The playing field? We need to own what we are actually researching. The metric? We select it. The limits? We set them. The validation path? We map it out, every step. It's genuinely important to recognize when a small local gain means nothing for the larger objective. When is a proxy outright misleading us? We simply must know.

That is the move. Not from human to machine. From manual iteration to system design.

In practical terms, the high-value work shifts toward things like:

- choosing what parts of the code are safe and worthwhile to expose to automated search

- deciding which metrics are meaningful and which ones are bait

- setting short-loop experiments that correlate with long-loop outcomes

- building promotion rules for code changes so the system does not pollute the baseline

- knowing when to stop the search because the problem formulation is the real issue
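One of those items, promotion rules, can be sketched in a few lines. This is a hypothetical gate, not something autoresearch ships: the minimum seed count, the `min_gain` threshold and the "worst seed must still beat the baseline mean" rule are all assumptions chosen for illustration.

```python
from statistics import mean


def should_promote(baseline_scores, candidate_scores, min_gain=0.005, min_seeds=3):
    """Decide whether a short-loop winner may replace the baseline.

    Scores are long-run val_bpb values, one per seed (lower is better).
    All thresholds are illustrative assumptions.
    """
    if len(baseline_scores) < min_seeds or len(candidate_scores) < min_seeds:
        return False  # not enough evidence either way
    base = mean(baseline_scores)
    gain = base - mean(candidate_scores)
    worst_still_wins = max(candidate_scores) < base
    return gain >= min_gain and worst_still_wins


baseline = [1.00, 1.01, 0.99]

print(should_promote(baseline, [0.980, 0.985, 0.975]))  # consistent win
print(should_promote(baseline, [0.95, 1.02, 0.99]))     # one seed regresses
print(should_promote(baseline, [0.90, 0.90]))           # too few seeds
```

The point is less the specific thresholds than the fact that someone has to choose them, write them down, and defend them.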

That is a more senior job. Also a harder one.

No more flying every mission yourself. Think about it: a shift from hands-on piloting to actually designing the entire system of air traffic! Less romantic, you think? Perhaps. But the sheer leverage? Oh my word, it's absolutely great.

What This Means for Technology Teams

Teams that learn to design the loop well will outrun teams that still treat experimentation as artisanal labor.

I would expect a few practical consequences if patterns like this keep improving.

Organizational: As the automation of experimentation advances, new job titles will probably emerge that we can hardly imagine today. Two examples I can think of:

- search space architect

- metric curator

Governance. That's a whole different animal, isn't it? Right now, the 'locked lab door' method, letting the agent touch only train.py, works fantastically as a clean, powerful control inside autoresearch. What happens when these systems receive extra leeway? More access? Well, then, oversight suddenly becomes critical. Every optimizer will try to find a weak point. Not out of malice. It's just how the optimization game is played. Give a system permission to muck with its own validation pathway, and do not act surprised when it cooks up some truly peculiar idea of what 'winning' even means.

This isn't only for machine learning, you understand. Nope. Actually, it shows up everywhere you have some kind of environment executing tasks, and then, something real gets measured from the outcome. Think compiler flags. Database fine-tuning, too. Or how about endlessly tweaking prompts in those super-smart agentic workflows, just trying to squeeze out the perfect answer? UI layout tests fit right in. Even certain parts of product experimentation can qualify, if someone has really thought through how success is supposed to be judged. You just can't miss this pattern.

Still, one issue remains unresolved and it is not a small one.

Transferability.

Short, five-minute sprints often yield promising results. But does the benefit carry over into the long, expensive, full-scale training runs that really matter? I’m not yet convinced by the data. So I’m more inclined to treat autoresearch as a faster way to generate candidates than as a solution that’s reliable on its own.

That is not cynicism. That is just scar tissue.

Conclusion: No, You Are Not Being Replaced

The machine runs the experiments faster, but the human still decides what is worth discovering.

Andrej Karpathy's autoresearch is a genuinely big deal. This takes a theoretical concept, something very abstract and truly intellectual, then makes it operational. Suddenly, you're seeing exactly how such a system functions in the real world. Get it? The loop becomes obvious instantly. Its sheer leverage? Undeniable! However, the potential pitfalls and dangers lurking within? Those, too, are plain as day for anyone paying attention.

This isn't a machine scientist, not really. It is an early blueprint for automated experimenters. They latch onto a focused problem. Then they work. All through the night. A whole boatload of possible improvements comes out before the team has even logged on.

That changes things.

Not everything. But enough.

The engineers who thrive in this new world will not be the ones who preserve the current sequential, manually driven process at all costs. They will be the ones designing better search spaces, better metrics, better guardrails and better validation mechanisms. That is the new role: from experiment runners to experiment governors.

Does this sound like a promotion to you? That was not my intention :-)
