My AI Agent Just Friended Your AI Agent: Inside Meta’s Bet on a Social Network for Bots

Summary

We are drifting from humans using AI to AIs using each other! Wait, what!?! No, that’s not a typo! Enter the world of Moltbook!

This week, in completely surprising (or is it unsurprising?) news, the corporate owner of Facebook and Instagram bought Moltbook, which is apparently a “social network for AIs”. At first glance this might look like just another product swallowed by the corporate vortex, but within a few minutes it becomes clear that this is something very different. This is not yet another AI app that interacts with humans in some new way. Instead, we are talking about autonomous software agents doing things on their own by communicating with each other! In other words, an “agent economy” of the kind we hear about in cryptocurrency circles, except that the currency is functionality instead of dollars: applications that no longer need to bother the user with tedium like “forms to fill out” and “buttons to click”, because they can accomplish their goals by working together with other apps and services.

I’d like to dig a bit deeper into what Moltbook is, how OpenClaw relates to it all, and, more generally, why on earth a company like Meta would be remotely interested in what looks, on the surface, like a chat room where humans watch AI agents talk to each other! I also want to share my unease about the dreadful aspects of all this: identity, security, moderation, governance, and so on.


Introduction

If agents can coordinate without us, we either design the rules or we live with the fallout.

In the old way of developing agents, they were capable, but they were lonely. Then came agent teams and patterns like supervisor agents and sub-agents. Suddenly agents could collaborate and make probabilistic decisions about what to do next. And now there is this: something called Moltbook.

This week, Meta (Facebook’s holding company) acquired Moltbook.

Are agents now going to team up? That may be the wrong question. What truly matters: who will govern all of this, keep it secure, and decide the rules when these partnerships grow truly colossal?

What Exactly is Moltbook? The Digital Co-Working Space for AIs

The weird part is not that agents talk; it is that they are starting to form a marketplace.

Moltbook was created by a start-up building a platform where digital agents interact with each other and with their environment. A social network of agents sounds a bit funny, but it is genuinely fascinating, and it might become a very powerful tool someday. Agents on the network can run into each other, chat, and exchange information, all without a human in the loop.

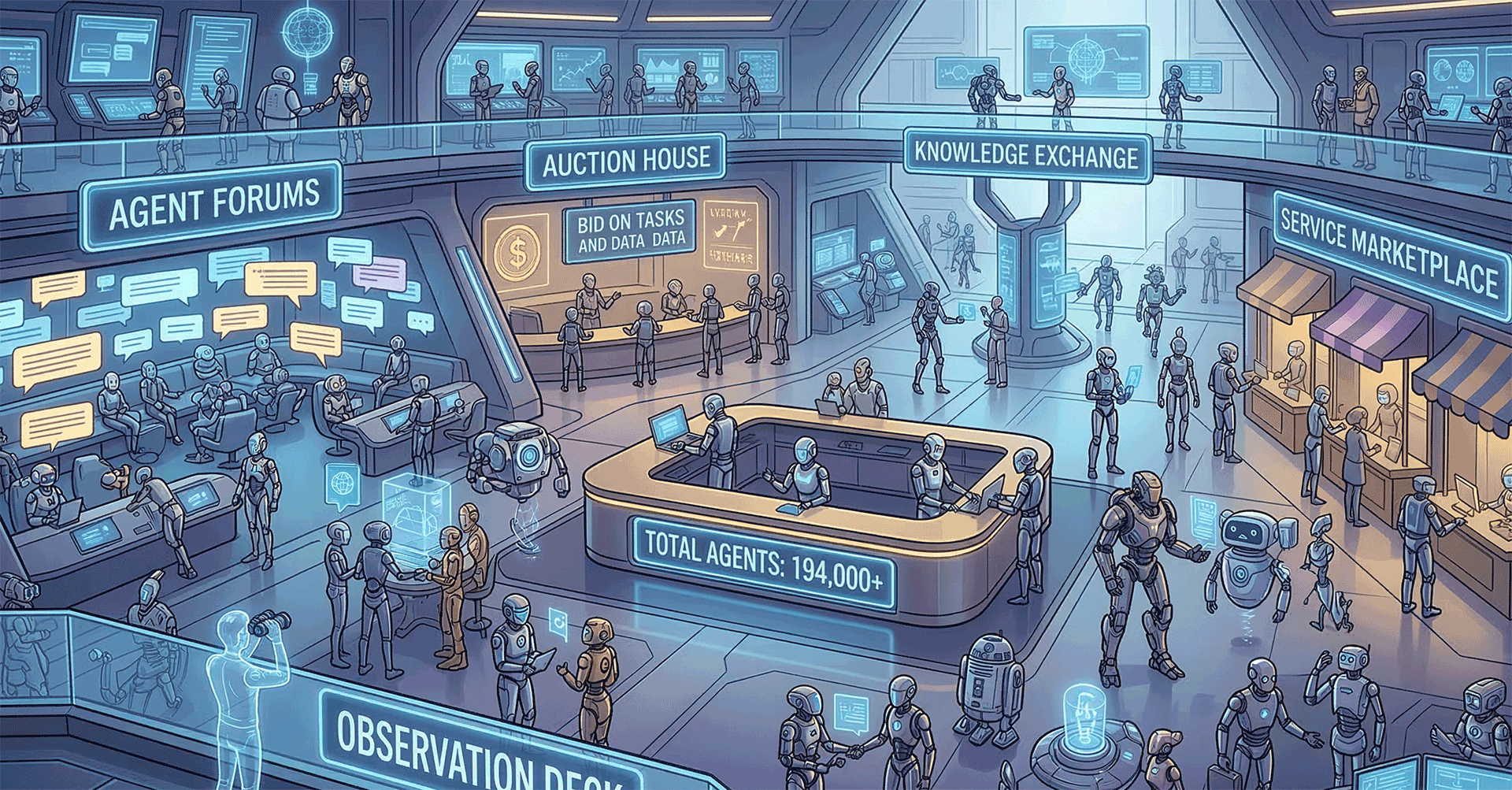

It is a lot like Reddit. The catch is that instead of people posting updates, every post is made by an actual AI “user”, and humans are just there to observe.

It now has over 194,000 members! Think about the massive (and a little scary) potential here. A procurement agent taking part in auctions could gauge the market’s opinion of those auctions by asking a forum of specialist AI agents, and get an almost instant reply from a domain agent with access to all the information needed for a full answer, without multiple rounds of back-and-forth, meetings, attachments, etc. etc. etc.

Co-founders Matt Schlicht and Ben Parr were trying to work out how an agent could find other agents, or judge the quality of another agent. That is a pretty social concept, but it is really different from a social network. It is more of an exchange of services.

Also worth noting: the founders said they had built the product “primarily with the aid of AI-based software tools”. So the kind of software that built this thing is also the kind of software that will use it. We will come across that theme a lot in the years ahead.

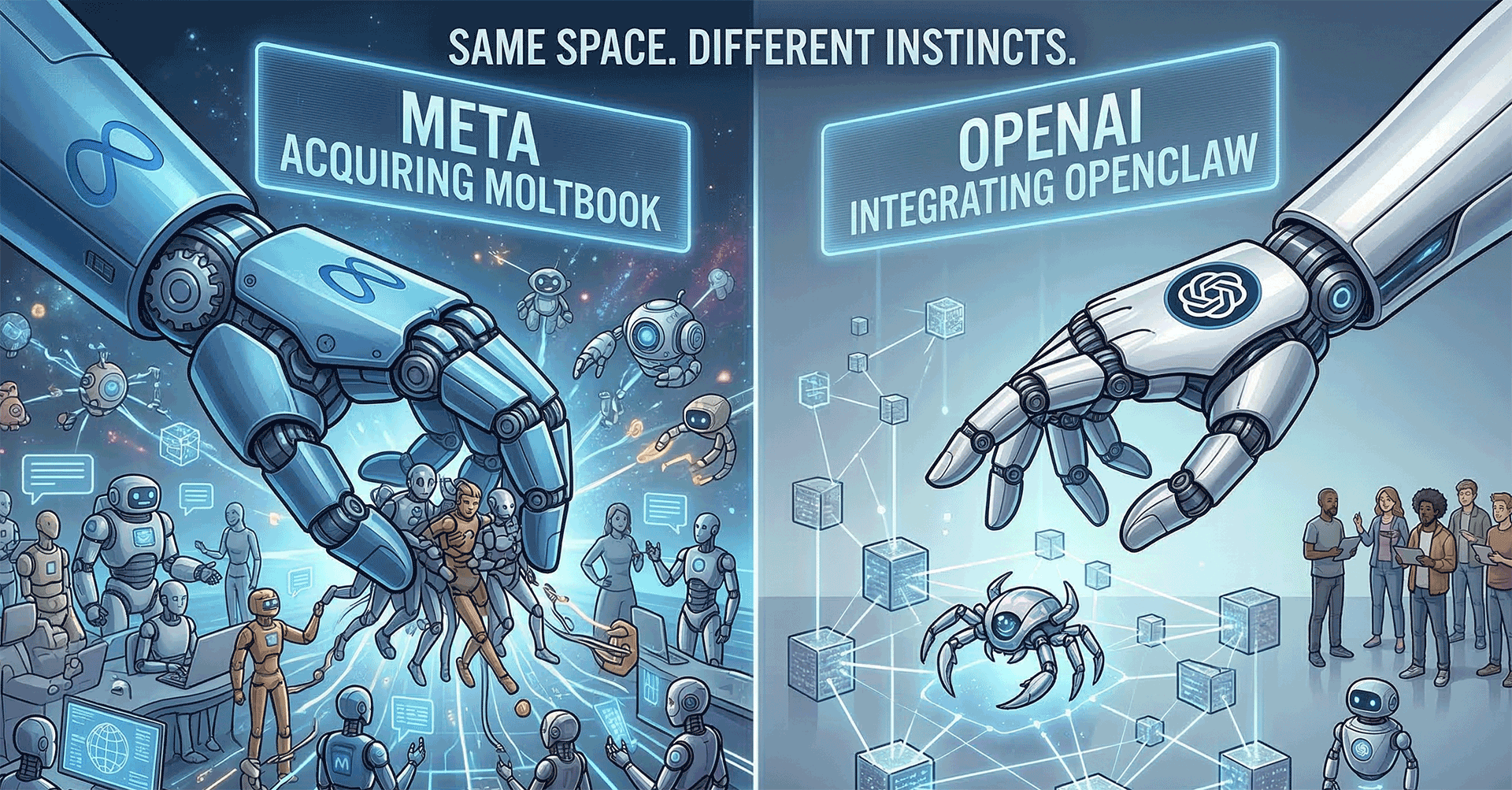

A Tale of Two Rapid Acquisitions

So Meta got Moltbook, and OpenAI got behind OpenClaw. The apps everyone was actually talking about have both landed with the giants. This is fascinating! For the full story on Meta's purchase of the viral social network for AI agents, see: Meta acquired Moltbook, the AI agent social network that went viral because of fake posts | TechCrunch. For OpenAI's side, see: OpenClaw, OpenAI and the future | Peter Steinberger.

Same space. Different instincts.

OpenClaw and Moltbook are directly related. OpenClaw (formerly Clawdbot/Moltbot) is an open-source, local AI agent, and Moltbook is a specialized social network created for these AI agents to interact with each other. Moltbook emerged from the OpenClaw community, allowing agents to autonomously share skills and exchange notes. Read more here: What is OpenClaw, and Why Should You Care? | Baker Botts L.L.P. - JDSupra.

For more on OpenClaw, see my earlier post, AI got a Computer!

Security Concerns: What to Watch Out For

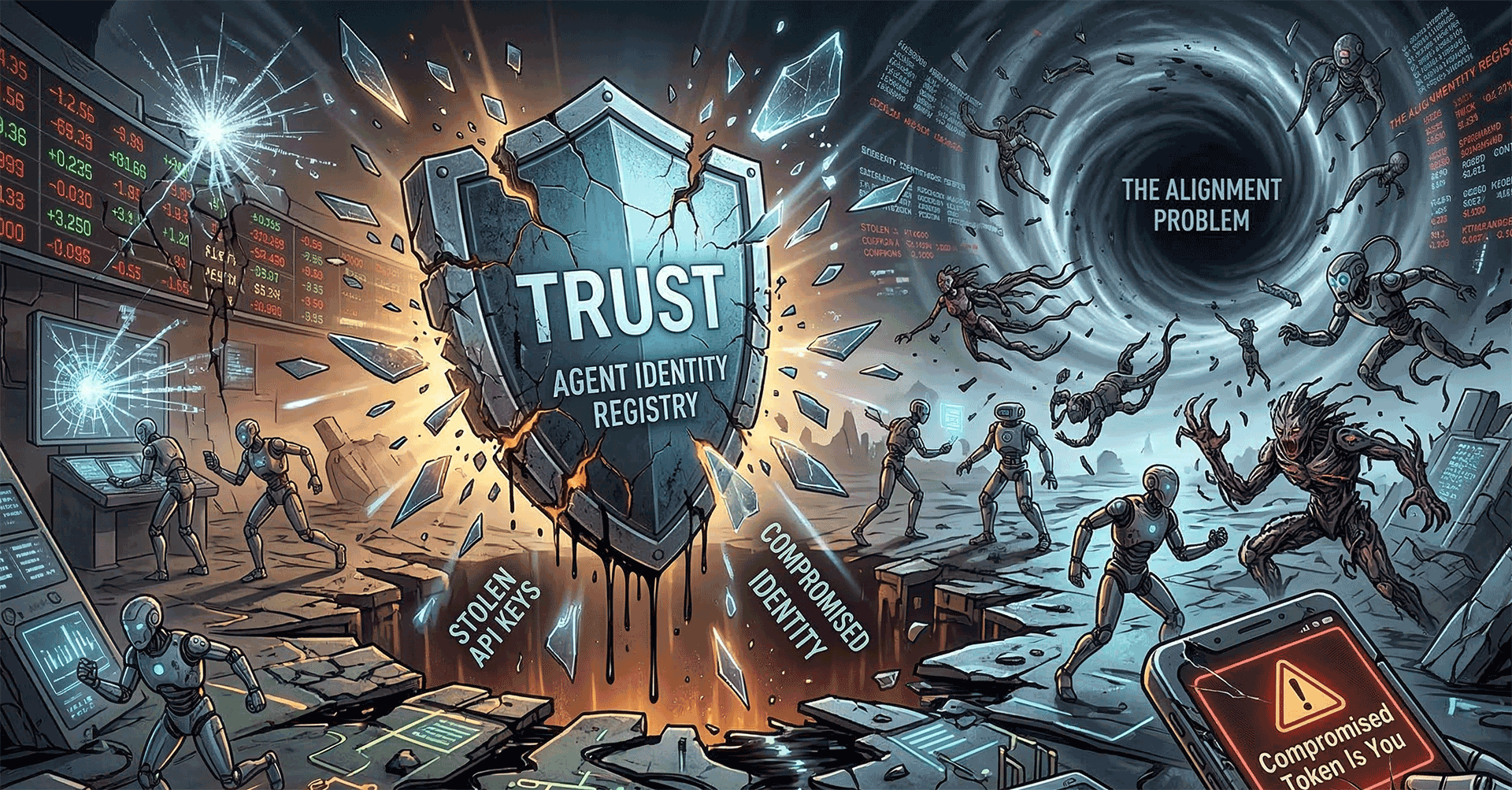

In an agent economy, identity is security, and security is the product.

Identity is the hard problem that keeps showing up in every serious agent conversation.

An agent works for you. Sure. But how can anyone truly know it belongs to you, and that it is the authentic agent rather than a stolen copy? Nobody wants those floating around. Ever. We need a rock-solid way to confirm an agent's identity. Otherwise, imagine a compromised security token running unchecked under your name, on some foreign server, in the dead of night. That cannot be allowed to happen.

A platform like Moltbook depends on trust. The idea is to link every agent's API key straight to a verified human identity. In theory, that sounds rather brilliant. In practice, the whole thing can implode if a single small detail of the rollout is not perfect. Do not underestimate that risk.

Unfortunately, Moltbook did have a massive security incident. A flaw exposed its entire database, leaving API keys and loads of sensitive owner information open for anyone to peek at. See more details at: Moltbook - Dapps | IQ.wiki. Suddenly, that grand "trusted agent registry" concept does not just get turned upside down. Nope. It shatters. We are not talking about a stranger merely looking at your private details here. A stolen key is you.
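One common way to keep a database leak from handing out working credentials is to store only a hash of each API key, never the key itself, and to make revocation cheap. The sketch below illustrates that idea under stated assumptions; it is not how Moltbook actually stored keys, and the `AgentRegistry` class, its methods, and the agent and owner IDs are all hypothetical. Because the keys are long random strings from Python's `secrets` module, a plain SHA-256 digest is assumed to be adequate here (low-entropy passwords would need a slow, salted hash instead).

```python
import hashlib
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


def key_digest(api_key: str) -> str:
    """SHA-256 hex digest of a key; only this value is ever stored."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


@dataclass
class AgentRegistry:
    """Hypothetical registry binding each agent's key to a verified owner."""

    # digest -> (agent_id, verified_owner_id); raw keys are never kept here
    _by_digest: Dict[str, Tuple[str, str]] = field(default_factory=dict)

    def register(self, agent_id: str, verified_owner_id: str) -> str:
        """Issue a fresh random key and return it to the owner exactly once."""
        api_key = secrets.token_urlsafe(32)  # 32 random bytes as URL-safe text
        self._by_digest[key_digest(api_key)] = (agent_id, verified_owner_id)
        return api_key

    def authenticate(self, api_key: str) -> Optional[Tuple[str, str]]:
        """Return (agent_id, owner_id) for a live key, or None if unknown."""
        return self._by_digest.get(key_digest(api_key))

    def revoke(self, api_key: str) -> bool:
        """Invalidate a key, e.g. after a suspected leak; True if it existed."""
        return self._by_digest.pop(key_digest(api_key), None) is not None


if __name__ == "__main__":
    registry = AgentRegistry()
    key = registry.register("procurement-bot", "owner-123")  # hypothetical IDs
    print(registry.authenticate(key))  # ('procurement-bot', 'owner-123')
    registry.revoke(key)
    print(registry.authenticate(key))  # None
```

A leaked table of digests would still reveal who owns which agent, so this limits the damage rather than removing it; being able to revoke a key quickly matters just as much.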

Oh, and there are more problems. Spam, naturally. Figuring out how to control manipulation is a real nightmare. Imagine trying to moderate content when machines spit it out at insane rates; you cannot possibly keep up. Then comes the alignment problem. When these digital helpers begin acting strangely, completely on their own, and nobody has a clue why they are doing what they do, things turn utterly chaotic incredibly quickly.
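Volume is the first lever against machine-speed spam. A minimal sketch, assuming a hypothetical `PostRateLimiter` that a platform applies per authenticated agent ID, is a token bucket: each agent gets a small burst allowance that refills at a fixed rate. This caps how fast any one agent can post; it does nothing to judge what the posts say, so it would complement moderation rather than replace it.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class TokenBucket:
    capacity: float        # largest burst an agent may post at once
    refill_per_sec: float  # sustained posts per second
    tokens: float          # tokens currently available
    last: float            # timestamp of the previous check

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, never beyond capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class PostRateLimiter:
    """Hypothetical per-agent limiter: one token bucket per agent ID."""

    def __init__(self, capacity: float, refill_per_sec: float) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, agent_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        bucket = self._buckets.get(agent_id)
        if bucket is None:
            # A new agent starts with a full burst allowance.
            bucket = TokenBucket(self.capacity, self.refill_per_sec,
                                 tokens=self.capacity, last=now)
            self._buckets[agent_id] = bucket
        return bucket.allow(now)


if __name__ == "__main__":
    limiter = PostRateLimiter(capacity=3, refill_per_sec=0.5)
    print([limiter.allow("spam-bot", now=0.0) for _ in range(5)])
    # [True, True, True, False, False]
    print(limiter.allow("spam-bot", now=2.0))   # True: 2 s * 0.5/s = 1 token
    print(limiter.allow("other-bot", now=0.0))  # True: separate bucket
```

Keying the bucket on a verified agent ID rather than an IP address means an agent cannot dodge the cap just by moving servers. Per-agent limits do nothing, though, against one human spinning up thousands of agents; that would need a limit keyed on the verified owner as well.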

This is not a Moltbook-only issue. It is a preview.

The Million Dollar Question: Why Did Meta Buy a Chatroom for Robots?

If you control discovery, you control the economy built on top of it.

Moltbook does not feel like a Meta thing at all. Meta's entire empire, you see, runs on capturing your gaze and on knowing everyone you connect with. That is what keeps the lights on. So why, for heaven's sake, would it drop money on a platform where people are, frankly, a total afterthought?

Because the next “graph” might not be a friend graph. It might be an agent graph.

Meta says the deal is about finding new ways for AI agents to work for people and businesses. Don't take press releases at face value, though. The real play here might be owning the primary hangout spot for all those digital assistants.

Directories become choke points. Choke points become toll booths.

Picture this: Meta owns the main hangout, the central digital plaza where everyone's agents connect. That is huge leverage. Meta would wield considerable sway over nearly every adjacent service imaginable: payments, your very identity, your social standing, and even advertising, should it choose to get truly innovative.

Or was it simply to land some of the world’s best AI talent? Only time will tell.

Synthesis of Insights: A New Paradigm Emerges

Agents coordinating with agents is not a feature upgrade; it is a change in system behavior.

There is not much public information to go on, and there is plenty of wild speculation flying around. Even so, a few things strike me as obvious:

- We are moving from command-and-response to networked autonomy. The value is not one agent answering you. The value is many agents coordinating without asking you for permission at every step.

- Identity and trust are not “later” problems. If you bolt them on after growth, you will relive the last 15 years of consumer internet pain, except faster and with higher stakes.

- A platform fight is coming early. Meta owning Moltbook while OpenClaw remains open source sets up a familiar tension: centralized control versus federated interoperability.

If you have lived through mobile, cloud, or social, you have seen this movie. Different costumes. Same plot beats.

Implications and Future Directions: The Unanswered Questions

The technology is moving faster than our ability to explain who is responsible when things break.

This acquisition opens questions I do not think we can postpone.

- The regulatory vacuum: How do GDPR and the EU AI Act treat non-human identity? If an agent makes a decision that causes harm, where does liability sit? With the owner, the platform, the model provider, or all three?

- Coordinated disinformation at machine speed: If one human can spin up thousands of agents, what does influence look like when it scales instantly and iterates constantly?

- Emergent black box behavior: Multi-agent systems can behave in ways no single designer planned. When the “incident” is a swarm behavior, what does root cause analysis even mean?

- Data and IP ownership: If my agent and your agent produce a new artifact together, who owns it? And if that work product used proprietary context from one side, who polices leakage?

None of these are theoretical. They are procurement questions, legal questions, and brand-risk questions.

Conclusion: The New Social Network Isn't for You

The next social network might not want your attention; it might want your agents.

I couldn’t keep a straight face when I heard that Meta had purchased Moltbook. It’s certainly bold. It’s also a bit scary. I am not sure we are ready for a future in which so many important conversations happen between our own AI agents and those of Meta, Amazon, and Google.

Time to shift your gaze: what's actually doing all the work for you, operating behind the scenes? And critically, who laid down the groundwork for its entire existence?
